Table of Contents

- How to convert multimedia files between formats using FFmpeg. FFmpeg is a complete, cross-platform solution to record, convert and stream audio and video. It includes libavcodec, the leading audio/video codec library.

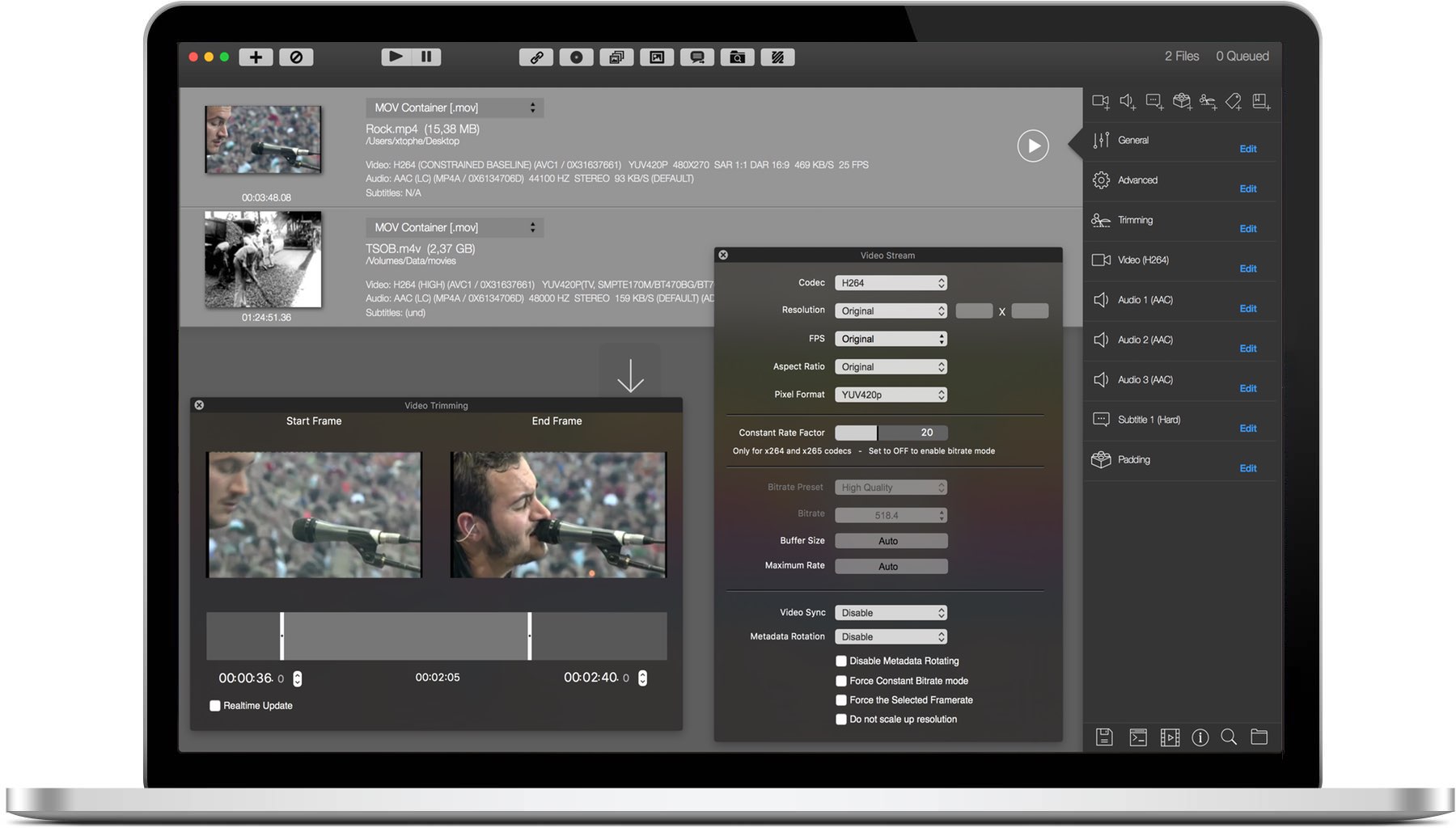

- iFFmpeg 6.1.5: iFFmpeg is a graphical front-end for FFmpeg, a command-line tool used to convert multimedia files between formats. The command-line instructions can be very hard to master, so iFFmpeg does all the hard work for you.

- 1 General Questions

- 2 Compilation

- 3 Usage

- 3.14 How can I concatenate video files?

- 4 Development

1 General Questions

FfWorks (formerly known as iFFmpeg) is a straightforward GUI for FFmpeg, the popular command-line utility capable of converting multimedia files between various formats. If you enjoy the conversion power and versatility of FFmpeg but also want a user-friendly interface that allows…

1.1 Why doesn't FFmpeg support feature [xyz]?

Because no one has taken on that task yet. FFmpeg development is driven by the tasks that are important to the individual developers. If there is a feature that is important to you, the best way to get it implemented is to undertake the task yourself or sponsor a developer.

1.2 FFmpeg does not support codec XXX. Can you include a Windows DLL loader to support it?

No. Windows DLLs are not portable, bloated and often slow. Moreover, FFmpeg strives to support all codecs natively. A DLL loader is not conducive to that goal.

1.3 I cannot read this file although this format seems to be supported by ffmpeg.

Even if ffmpeg can read the container format, it may not support all its codecs. Please consult the supported codec list in the ffmpeg documentation.

1.4 Which codecs are supported by Windows?

Windows does not support standard formats like MPEG very well, unless you install some additional codecs.

The following list of video codecs should work on most Windows systems:

.avi/.asf


.asf only

.asf only

.asf only

Only if you have some MPEG-4 codec like ffdshow or Xvid installed.

.mpg only

Note that ASF files often have .wmv or .wma extensions in Windows. It should also be mentioned that Microsoft claims a patent on the ASF format, and may sue or threaten users who create ASF files with non-Microsoft software. It is strongly advised to avoid ASF where possible.

The following list of audio codecs should work on most Windows systems:

always

If some MP3 codec like LAME is installed.

2 Compilation

2.1 error: can't find a register in class 'GENERAL_REGS' while reloading 'asm'

This is a bug in gcc. Do not report it to us. Instead, please report it to the gcc developers. Note that we will not add workarounds for gcc bugs.

Also note that (some of) the gcc developers believe this is not a bug or not a bug they should fix: https://gcc.gnu.org/bugzilla/show_bug.cgi?id=11203. Then again, some of them do not know the difference between an undecidable problem and an NP-hard problem.

2.2 I have installed this library with my distro's package manager. Why does configure not see it?

Distributions usually split libraries into several packages. The main package contains the files necessary to run programs using the library. The development package contains the files necessary to build programs using the library. Sometimes, docs and/or data are in a separate package too.

To build FFmpeg, you need to install the development package. It is usually called libfoo-dev or libfoo-devel. You can remove it after the build is finished, but be sure to keep the main package.

2.3 How do I make pkg-config find my libraries?

Somewhere along with your libraries, there is a .pc file (or several) in a pkgconfig directory. You need to set environment variables to point pkg-config to these files.

If you need to add directories to pkg-config's search list (typical use case: library installed separately), add them to $PKG_CONFIG_PATH:
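A minimal sketch, assuming two hypothetical install prefixes, /opt/x264 and /opt/opus. Their pkgconfig directories are appended to whatever search list is already set; pkg-config still searches its default directories after these.

```shell
#!/bin/sh
# Hypothetical prefixes: replace them with wherever your .pc files live.
# ${VAR:+...} keeps an existing value and adds a ":" separator only if needed.
PKG_CONFIG_PATH="${PKG_CONFIG_PATH:+$PKG_CONFIG_PATH:}/opt/x264/lib/pkgconfig:/opt/opus/lib/pkgconfig"
export PKG_CONFIG_PATH
echo "$PKG_CONFIG_PATH"
```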

If you need to replace pkg-config's search list (typical use case: cross-compiling), set it in $PKG_CONFIG_LIBDIR:
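A minimal sketch, assuming a hypothetical cross sysroot at /home/me/cross. Because PKG_CONFIG_LIBDIR replaces the default list, pkg-config no longer falls back to the host's own .pc files.

```shell
#!/bin/sh
# Hypothetical sysroot of the cross toolchain; adjust to your environment.
SYSROOT=/home/me/cross
# Only these directories are searched; host libraries are no longer visible.
PKG_CONFIG_LIBDIR="$SYSROOT/usr/lib/pkgconfig:$SYSROOT/usr/local/lib/pkgconfig"
export PKG_CONFIG_LIBDIR
echo "$PKG_CONFIG_LIBDIR"
```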

If you need to know the library's internal dependencies (typical use: static linking), add the --static option to pkg-config:
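To see what --static changes, this sketch writes a throwaway .pc file for a hypothetical library foo (its name, version and the -lm private dependency are made up for the demo) and queries it both ways. Only the static query includes the Libs.private entries.

```shell
#!/bin/sh
set -e
# Throwaway .pc file for a hypothetical library "foo" whose static archive
# additionally needs libm (listed under Libs.private).
pcdir=$(mktemp -d)
cat > "$pcdir/foo.pc" <<EOF
prefix=$pcdir
libdir=\${prefix}/lib
includedir=\${prefix}/include

Name: foo
Description: Hypothetical example library
Version: 1.0
Cflags: -I\${includedir}
Libs: -L\${libdir} -lfoo
Libs.private: -lm
EOF
export PKG_CONFIG_PATH="$pcdir"

# Dynamic linking: only the library itself.
pkg-config --libs foo
# Static linking: the library plus its private dependencies.
pkg-config --libs --static foo
```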

2.4 How do I use pkg-config when cross-compiling?

The best way is to install pkg-config in your cross-compilation environment. It will automatically use the cross-compilation libraries.

You can also use pkg-config from the host environment by specifying --pkg-config=pkg-config explicitly to configure. In that case, you must point pkg-config to the correct directories using PKG_CONFIG_LIBDIR, as explained in the previous entry.

As an intermediate solution, you can place a script in your cross-compilation environment that calls the host pkg-config with PKG_CONFIG_LIBDIR set. Such a script could look like this:
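A sketch that writes such a wrapper, assuming a hypothetical sysroot /path/to/cross and an arbitrary wrapper name, cross-pkg-config. The heredoc expands $sysroot when the file is written but escapes "$@" so the wrapper forwards its own arguments.

```shell
#!/bin/sh
set -e
# Hypothetical locations: the cross sysroot and the wrapper's file name.
sysroot=/path/to/cross
wrapper=./cross-pkg-config

# The wrapper runs the host pkg-config with only the cross .pc files visible.
# It must not itself be named pkg-config if it sits in PATH, or exec would
# find the wrapper again instead of the real tool.
cat > "$wrapper" <<EOF
#!/bin/sh
PKG_CONFIG_LIBDIR=$sysroot/usr/lib/pkgconfig
export PKG_CONFIG_LIBDIR
exec pkg-config "\$@"
EOF
chmod +x "$wrapper"
```

You would then pass it to configure as --pkg-config=/full/path/to/cross-pkg-config, alongside your usual cross-compilation options.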

3 Usage

3.1 ffmpeg does not work; what is wrong?

Try a make distclean in the ffmpeg source directory before the build. If this does not help, see https://ffmpeg.org/bugreports.html.

3.2 How do I encode single pictures into movies?

First, rename your pictures to follow a numerical sequence, for example img1.jpg, img2.jpg, img3.jpg, ... Then you may run:
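A sketch of this step, assuming ffmpeg is on PATH. So that it runs on its own, it first generates five sample frames (img1.jpg to img5.jpg) with the lavfi testsrc source; with real pictures you would skip that line.

```shell
#!/bin/sh
set -e
# Demo input only: five JPEG frames, img1.jpg ... img5.jpg.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=5 img%d.jpg

# Encode the numbered pictures into an MPEG-1 movie.
ffmpeg -y -f image2 -i img%d.jpg /tmp/a.mpg
```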

Notice that '%d' is replaced by the image number.

img%03d.jpg means the sequence img001.jpg, img002.jpg, etc.

Use the -start_number option to declare a starting number for the sequence. This is useful if your sequence does not start with img001.jpg but is still in numerical order. The following example will start with img100.jpg:
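A sketch, assuming ffmpeg is on PATH. The first command only creates demo frames numbered from 100 (the image2 muxer accepts the same -start_number option); the second tells the image2 demuxer where the sequence begins and encodes to MPEG-4.

```shell
#!/bin/sh
set -e
# Demo input only: img100.jpg, img101.jpg, ... img104.jpg.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=5 \
       -start_number 100 img%d.jpg

# Start reading the sequence at img100.jpg.
ffmpeg -y -f image2 -start_number 100 -i 'img%d.jpg' -c:v mpeg4 /tmp/a.mp4
```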

If you have a large number of pictures to rename, you can use the following command to ease the burden. The command, using Bourne shell syntax, symbolically links all files in the current directory that match *jpg to the /tmp directory in the sequence img001.jpg, img002.jpg and so on.

If you want to sequence them by oldest modified first, substitute $(ls -r -t *jpg) in place of *jpg.

Then run:
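Putting the renaming loop and the encode together, a sketch assuming ffmpeg is on PATH. Two deliberate deviations, both assumptions of this sketch: the links go into a fresh scratch directory rather than /tmp itself, so stale img*.jpg files from earlier runs cannot join the sequence, and the link targets are absolute, because a relative target would be resolved from the link's own directory rather than the current one. The demo frames with arbitrary names (shot01.jpg ...) are generated only so the script runs on its own.

```shell
#!/bin/sh
set -e
# Demo setup only: a scratch directory with a few arbitrarily named JPEGs.
src=$(mktemp -d)
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=3 "$src/shot%02d.jpg"
cd "$src"

# Fresh destination instead of /tmp, so no stale img*.jpg can sneak in.
dst=$(mktemp -d)

# Link every *jpg as img001.jpg, img002.jpg, ...
# (use $(ls -r -t *jpg) instead of *jpg to order by modification time)
x=1
for i in *jpg; do
  counter=$(printf %03d "$x")
  ln -s "$PWD/$i" "$dst/img$counter.jpg"   # absolute target
  x=$((x + 1))
done

# Encode the renumbered sequence.
ffmpeg -y -f image2 -i "$dst/img%03d.jpg" "$dst/a.mpg"
```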

The same logic is used for any image format that ffmpeg reads.

You can also use cat to pipe images to ffmpeg:
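A sketch, assuming ffmpeg is on PATH and using generated demo JPEGs (pipe1.jpg ... pipe5.jpg). The image2pipe demuxer reads the concatenated pictures from standard input, and -c:v mjpeg before -i selects the decoder.

```shell
#!/bin/sh
set -e
# Demo input only: five JPEG files.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=5 pipe%d.jpg

# Feed all JPEGs through stdin ("-i -") and encode them into a movie.
cat pipe*.jpg | ffmpeg -y -f image2pipe -c:v mjpeg -i - output.mpg
```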

3.3 How do I encode a movie to single pictures?

Use:
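A sketch, assuming ffmpeg is on PATH; the first command only creates a two-second demo clip named movie.mpg. The image format is guessed from the .jpg extension of the output pattern.

```shell
#!/bin/sh
set -e
# Demo input only: a short MPEG-1 test clip.
ffmpeg -y -f lavfi -i testsrc=duration=2:size=320x240:rate=25 movie.mpg

# One JPEG per frame: movie1.jpg, movie2.jpg, ...
ffmpeg -y -i movie.mpg movie%d.jpg
```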

The movie.mpg used as input will be converted to movie1.jpg, movie2.jpg, etc.

Instead of relying on file format self-recognition, you may also use

- -c:v ppm

- -c:v png

- -c:v mjpeg

to force the encoding.

Applying that to the previous example:
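A sketch, assuming ffmpeg is on PATH; the first command only creates a one-second demo clip named movie.mpg. The muxer and encoder are now named explicitly instead of being guessed from the file extension.

```shell
#!/bin/sh
set -e
# Demo input only: a short MPEG-1 test clip.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 movie.mpg

# Force the image2 muxer and the MJPEG encoder: menu1.jpg, menu2.jpg, ...
ffmpeg -y -i movie.mpg -f image2 -c:v mjpeg menu%d.jpg
```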

Beware that there is no 'jpeg' codec. Use 'mjpeg' instead.

3.4 Why do I see a slight quality degradation with multithreaded MPEG* encoding?

For multithreaded MPEG* encoding, the encoded slices must be independent, otherwise thread n would practically have to wait for n-1 to finish, so it's quite logical that there is a small reduction of quality. This is not a bug.

3.5 How can I read from the standard input or write to the standard output?

Use - as file name.
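A sketch, assuming ffmpeg and ffprobe are on PATH and a generated demo clip in.mpg. A pipe has no file extension to guess from, so the output format is given with -f.

```shell
#!/bin/sh
set -e
# Demo input only: a short MPEG-1 test clip.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 in.mpg

# Read from standard input, remux without re-encoding, write to standard output.
ffmpeg -i - -c copy -f mpegts - < in.mpg > out.ts
```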

3.6 -f jpeg doesn't work.

Try '-f image2 test%d.jpg'.

3.7 Why can I not change the frame rate?

Some codecs, like MPEG-1/2, only allow a small number of fixed frame rates. Choose a different codec with the -c:v command line option.

3.8 How do I encode Xvid or DivX video with ffmpeg?

Both Xvid and DivX (version 4+) are implementations of the ISO MPEG-4 standard (note that there are many other coding formats that use this same standard). Thus, use '-c:v mpeg4' to encode in these formats. The default fourcc stored in an MPEG-4-coded file will be 'FMP4'. If you want a different fourcc, use the '-vtag' option. E.g., '-vtag xvid' will force the fourcc 'xvid' to be stored as the video fourcc rather than the default.
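A sketch, assuming ffmpeg and ffprobe are on PATH and a generated demo clip in.mpg. The last command prints the fourcc that actually ended up in the AVI file.

```shell
#!/bin/sh
set -e
# Demo input only: a short test clip.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 in.mpg

# Native MPEG-4 Part 2 encoder, stored with the fourcc 'xvid' instead of 'FMP4'.
ffmpeg -y -i in.mpg -c:v mpeg4 -vtag xvid out.avi

# Show the stored fourcc.
ffprobe -v error -select_streams v:0 -show_entries stream=codec_tag_string \
        -of default=noprint_wrappers=1:nokey=1 out.avi
```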

3.9 Which are good parameters for encoding high quality MPEG-4?

'-mbd rd -flags +mv4+aic -trellis 2 -cmp 2 -subcmp 2 -g 300 -pass 1/2'; things to try: '-bf 2', '-mpv_flags qp_rd', '-mpv_flags mv0', '-mpv_flags skip_rd'.
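A sketch of how '-pass 1/2' translates into two runs, assuming ffmpeg is on PATH and a generated demo clip. The 800k target bitrate is an arbitrary example value, not a recommendation; pass 1 only writes statistics (to ffmpeg2pass-0.log), so its output is discarded.

```shell
#!/bin/sh
set -e
# Demo input only: a short test clip.
ffmpeg -y -f lavfi -i testsrc=duration=2:size=320x240:rate=25 in.mpg

# Options shared by both passes; the bitrate is an illustrative assumption.
OPTS="-c:v mpeg4 -b:v 800k -mbd rd -flags +mv4+aic -trellis 2 -cmp 2 -subcmp 2 -g 300"

# Pass 1: analyse only. $OPTS is unquoted on purpose so it splits into words.
ffmpeg -y -i in.mpg $OPTS -pass 1 -an -f null /dev/null
# Pass 2: read the statistics and write the real file.
ffmpeg -y -i in.mpg $OPTS -pass 2 out.avi
```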

3.10 Which are good parameters for encoding high quality MPEG-1/MPEG-2?

'-mbd rd -trellis 2 -cmp 2 -subcmp 2 -g 100 -pass 1/2', but beware that '-g 100' might cause problems with some decoders. Things to try: '-bf 2', '-mpv_flags qp_rd', '-mpv_flags mv0', '-mpv_flags skip_rd'.

3.11 Interlaced video looks very bad when encoded with ffmpeg, what is wrong?

You should use '-flags +ilme+ildct' and maybe '-flags +alt' for interlaced material, and try '-top 0/1' if the result looks really messed up.
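A sketch of the interlacing flags with the MPEG-2 encoder, assuming ffmpeg is on PATH. The 720x576 testsrc clip is only a stand-in for real interlaced material, and the '-flags +alt' and '-top' suggestions are left out.

```shell
#!/bin/sh
set -e
# Demo input only: a stand-in for interlaced source material.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=720x576:rate=25 in.nut

# Interlaced motion estimation (ilme) and interlaced DCT (ildct).
ffmpeg -y -i in.nut -c:v mpeg2video -flags +ilme+ildct out.mpg
```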

3.12 How can I read DirectShow files?

If you have built FFmpeg with ./configure --enable-avisynth (only possible on MinGW/Cygwin platforms), then you may use any file that DirectShow can read as input.

Just create an 'input.avs' text file with this single line ..

.. and then feed that text file to ffmpeg:

For ANY other help on AviSynth, please visit the AviSynth homepage.

3.13 How can I join video files?

To 'join' video files is quite ambiguous. The following list explains the different kinds of 'joining' and points out how those are addressed in FFmpeg. To join video files may mean:

- To put them one after the other: this is called to concatenate them (in short: concat) and is addressed in this very FAQ.

- To put them together in the same file, to let the user choose between the different versions (example: different audio languages): this is called to multiplex them together (in short: mux), and is done by simply invoking ffmpeg with several -i options.

- For audio, to put all channels together in a single stream (example: two mono streams into one stereo stream): this is sometimes called to merge them, and can be done using the amerge filter.

- For audio, to play one on top of the other: this is called to mix them, and can be done by first merging them into a single stream and then using the pan filter to mix the channels at will.

- For video, to display both together, side by side or one on top of a part of the other: it can be done using the overlay video filter.

3.14 How can I concatenate video files?

There are several solutions, depending on the exact circumstances.

3.14.1 Concatenating using the concat filter

FFmpeg has a concat filter designed specifically for that, with examples in the documentation. This operation is recommended if you need to re-encode.
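A sketch using the concat filter, assuming ffmpeg and ffprobe are on PATH. The two demo clips (one video and one audio stream each, with identical parameters) are generated from testsrc and sine; the filter then takes n=2 segments with v=1 video and a=1 audio stream each, fed in segment order.

```shell
#!/bin/sh
set -e
# Demo inputs only: two one-second clips with video and audio.
for n in 1 2; do
  ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 \
         -f lavfi -i sine=frequency=440:duration=1 -shortest "part$n.mkv"
done

# Decode, join and re-encode both clips.
ffmpeg -y -i part1.mkv -i part2.mkv \
       -filter_complex "[0:v][0:a][1:v][1:a]concat=n=2:v=1:a=1[v][a]" \
       -map "[v]" -map "[a]" joined.mkv
```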

3.14.2 Concatenating using the concat demuxer

FFmpeg has a concat demuxer which you can use when you want to avoid a re-encode and your format doesn't support file-level concatenation.
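A sketch using the concat demuxer, assuming ffmpeg and ffprobe are on PATH. Stream copy only works when the inputs share codecs and parameters, so the two demo clips are encoded identically. The demuxer reads a text file with one file '...' line per input; relative names are resolved against the list file's directory.

```shell
#!/bin/sh
set -e
# Demo inputs only: two clips with identical codec settings.
for n in 1 2; do
  ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 -c:v mpeg4 "clip$n.mp4"
done

# List of inputs for the concat demuxer.
printf "file '%s'\n" clip1.mp4 clip2.mp4 > list.txt

# Append the streams without re-encoding.
ffmpeg -y -f concat -i list.txt -c copy joined.mp4
```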

3.14.3 Concatenating using the concat protocol (file level)

FFmpeg has a concat protocol designed specifically for that, with examples in the documentation.

A few multimedia containers (MPEG-1, MPEG-2 PS, DV) allow one to concatenate video by merely concatenating the files containing them.

Hence you may concatenate your multimedia files by first transcoding them to these privileged formats, then using the humble cat command (or the equally humble copy under Windows), and finally transcoding back to your format of choice.
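The three steps as a sketch, assuming ffmpeg is on PATH and two generated AVI demo clips. -qscale:v 1 keeps the intermediate MPEG files at the highest quality so that the extra transcode costs as little as possible.

```shell
#!/bin/sh
set -e
# Demo inputs only: two short AVI clips.
for n in 1 2; do
  ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 "input$n.avi"
done

# 1. Transcode each input to MPEG-PS.
ffmpeg -y -i input1.avi -qscale:v 1 intermediate1.mpg
ffmpeg -y -i input2.avi -qscale:v 1 intermediate2.mpg
# 2. Join the files at byte level.
cat intermediate1.mpg intermediate2.mpg > intermediate_all.mpg
# 3. Transcode the joined file back to the format of choice.
ffmpeg -y -i intermediate_all.mpg -qscale:v 2 output.avi
```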

Additionally, you can use the concat protocol instead of cat or copy, which will avoid creation of a potentially huge intermediate file.

Note that you may need to escape the character '|', which is special for many shells.
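A sketch of the concat protocol, assuming ffmpeg is on PATH and two generated MPEG-PS demo files. Quoting the whole concat: URL keeps the shell from treating '|' as a pipe.

```shell
#!/bin/sh
set -e
# Demo inputs only: two MPEG-PS files, a format that can be joined at file level.
for n in 1 2; do
  ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 \
         -qscale:v 1 "intermediate$n.mpg"
done

# Read both files back to back, without writing a cat-style intermediate file.
ffmpeg -y -i "concat:intermediate1.mpg|intermediate2.mpg" -c copy intermediate_all.mpg
```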

Another option is to use named pipes, if your platform supports them:
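A sketch with named pipes (FIFOs), assuming a UNIX-like system with mkfifo and ffmpeg on PATH; the AVI demo inputs are generated first. Each background ffmpeg blocks until cat opens its FIFO, so the MPEG intermediates never touch the disk.

```shell
#!/bin/sh
set -e
# Demo inputs only: two short AVI clips.
for n in 1 2; do
  ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 "input$n.avi"
done

# FIFOs take the place of the intermediate MPEG files.
rm -f intermediate1.mpg intermediate2.mpg
mkfifo intermediate1.mpg intermediate2.mpg

# -y because the FIFOs already exist; < /dev/null keeps the jobs off the console.
ffmpeg -i input1.avi -qscale:v 1 -f mpeg -y intermediate1.mpg < /dev/null &
ffmpeg -i input2.avi -qscale:v 1 -f mpeg -y intermediate2.mpg < /dev/null &

# cat drains the FIFOs in order; the last ffmpeg transcodes the joined stream.
cat intermediate1.mpg intermediate2.mpg |
  ffmpeg -y -f mpeg -i - -c:v mpeg4 output.avi

wait
rm -f intermediate1.mpg intermediate2.mpg
```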

3.14.4 Concatenating using raw audio and video

Similarly, the yuv4mpegpipe format and the raw video and raw audio codecs also allow concatenation, and the transcoding step is almost lossless. When using multiple yuv4mpegpipe(s), the first line needs to be discarded from all but the first stream. This can be accomplished by piping through tail, as seen below. Note that when piping through tail you must use command grouping, { ;}, to background properly.

For example, let's say we want to concatenate two FLV files into an output.flv file:
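A sketch of that pipeline, assuming a UNIX-like system with mkfifo and ffmpeg on PATH; the two FLV demo inputs are generated first. The raw audio is written as signed 16-bit little-endian PCM, stereo, 44.1 kHz (-f s16le with pcm_s16le), and those parameters have to be restated when the joined raw audio is read back.

```shell
#!/bin/sh
set -e
# Demo inputs only: two FLV clips with video and audio.
for n in 1 2; do
  ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 \
         -f lavfi -i sine=frequency=440:duration=1 -shortest "input$n.flv"
done

rm -f temp1.a temp1.v temp2.a temp2.v all.a all.v
mkfifo temp1.a temp1.v temp2.a temp2.v all.a all.v

# Decode each input to raw PCM and to YUV4MPEG, in the background.
ffmpeg -i input1.flv -vn -f s16le -c:a pcm_s16le -ac 2 -ar 44100 - > temp1.a < /dev/null &
ffmpeg -i input2.flv -vn -f s16le -c:a pcm_s16le -ac 2 -ar 44100 - > temp2.a < /dev/null &
ffmpeg -i input1.flv -an -f yuv4mpegpipe - > temp1.v < /dev/null &
# Drop the YUV4MPEG header line of the second stream; the braces let the
# whole pipeline run in the background.
{ ffmpeg -i input2.flv -an -f yuv4mpegpipe - < /dev/null | tail -n +2 > temp2.v ; } &

# Join the pieces at byte level.
cat temp1.a temp2.a > all.a &
cat temp1.v temp2.v > all.v &

# Re-encode the joined raw streams.
ffmpeg -y -f s16le -c:a pcm_s16le -ac 2 -ar 44100 -i all.a \
       -f yuv4mpegpipe -i all.v output.flv

wait
rm -f temp1.a temp1.v temp2.a temp2.v all.a all.v
```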

3.15 Using -f lavfi, audio becomes mono for no apparent reason.

Use -dumpgraph - to find out exactly where the channel layout is lost.

Most likely, it is through an auto-inserted aresample. Try to understand why the converting filter was needed at that place.

Just before the output is a likely place, as -f lavfi currently only supports packed S16.

Then insert the correct aformat explicitly in the filtergraph, specifying the exact format.

3.16 Why does FFmpeg not see the subtitles in my VOB file?

VOB and a few other formats do not have a global header that describes everything present in the file. Instead, applications are supposed to scan the file to see what it contains. Since VOB files are frequently large, only the beginning is scanned. If the subtitles happen only later in the file, they will not be initially detected.

Some applications, including the ffmpeg command-line tool, can only work with streams that were detected during the initial scan; streams that are detected later are ignored.

The size of the initial scan is controlled by two options: probesize (default ~5 MB) and analyzeduration (default 5,000,000 µs = 5 s). For the subtitle stream to be detected, both values must be large enough.
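A sketch of raising both limits, assuming ffmpeg and ffprobe are on PATH; a small MPEG-2 program stream written with the vob muxer stands in for a real VOB. probesize is in bytes and analyzeduration in microseconds (100M = 100 s); both are input options, so they go before -i, and ffmpeg accepts them the same way.

```shell
#!/bin/sh
set -e
# Demo input only: a short MPEG-2 program stream in VOB format.
ffmpeg -y -f lavfi -i testsrc=duration=2:size=720x576:rate=25 \
       -f lavfi -i sine=frequency=440:duration=2 \
       -c:v mpeg2video -c:a mp2 -f vob input.vob

# Scan up to 100 MB / 100 s before deciding which streams exist.
ffprobe -v error -probesize 100M -analyzeduration 100M \
        -show_entries stream=codec_type -of csv=p=0 input.vob
```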

3.17 Why was the ffmpeg -sameq option removed? What to use instead?

The -sameq option meant 'same quantizer', and made sense only in a very limited set of cases. Unfortunately, a lot of people mistook it for 'same quality' and used it in places where it did not make sense: it had roughly the expected visible effect, but achieved it in a very inefficient way.

Each encoder has its own set of options to set the quality-vs-size balance; use the options for the encoder you are using to set the quality level to a point acceptable for your tastes. The most common options to do that are -qscale and -qmax, but you should peruse the documentation of the encoder you chose.
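A sketch with the native MPEG-4 encoder, assuming ffmpeg is on PATH and a generated demo clip. For this encoder -qscale:v takes values from 1 to 31, where a lower value means higher quality and a larger file; other encoders use different options and ranges.

```shell
#!/bin/sh
set -e
# Demo input only: a short test clip.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 in.mpg

# Same source at two fixed quantizers.
ffmpeg -y -i in.mpg -c:v mpeg4 -qscale:v 3 q3.mp4
ffmpeg -y -i in.mpg -c:v mpeg4 -qscale:v 20 q20.mp4
```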

3.18 I have a stretched video, why does scaling not fix it?

A lot of video codecs and formats can store the aspect ratio of the video: this is the ratio between the width and the height of either the full image (DAR, display aspect ratio) or individual pixels (SAR, sample aspect ratio). For example, EGA screens at resolution 640×350 had 4:3 DAR and 35:48 SAR.

Most still-image processing works with square pixels, i.e. 1:1 SAR, but a lot of video standards, especially from the analog-to-digital transition era, use non-square pixels.

Most processing filters in FFmpeg handle the aspect ratio to avoid stretching the image: cropping adjusts the DAR to keep the SAR constant, scaling adjusts the SAR to keep the DAR constant.

If you want to stretch, or 'unstretch', the image, you need to override the information with the setdar or setsar filters.

Do not forget to examine carefully the original video to check whether the stretching comes from the image or from the aspect ratio information.

For example, to fix a badly encoded EGA capture, use the following commands, either the first one to upscale to square pixels or the second one to set the correct aspect ratio or the third one to avoid transcoding (may not work depending on the format / codec / player / phase of the moon):
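A sketch of the three approaches, assuming ffmpeg and ffprobe are on PATH; a 640x350 testsrc clip in NUT stands in for the EGA capture. With setdar=4/3 the frame stays 640x350 and the SAR becomes 35:48, matching the EGA numbers given above.

```shell
#!/bin/sh
set -e
# Demo input only: a 640x350 stand-in for the EGA capture.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=640x350:rate=25 ega_screen.nut

# 1. Upscale to square pixels: 640x480, SAR 1:1.
ffmpeg -y -i ega_screen.nut -vf scale=640:480,setsar=1 ega_screen_scaled.nut
# 2. Keep 640x350 but declare a 4:3 display aspect ratio.
ffmpeg -y -i ega_screen.nut -vf setdar=4/3 ega_screen_anamorphic.nut
# 3. Override the aspect ratio without transcoding (player support varies).
ffmpeg -y -i ega_screen.nut -aspect 4/3 -c copy ega_screen_overridden.nut
```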

3.19 How do I run ffmpeg as a background task?

ffmpeg normally checks the console input, for entries like 'q' to stop and '?' to give help, while performing operations. ffmpeg does not have a way of detecting when it is running as a background task. When it checks the console input, that can cause the process running ffmpeg in the background to suspend.

To prevent those input checks, allowing ffmpeg to run as a background task, use the -nostdin option in the ffmpeg invocation. This is effective whether you run ffmpeg in a shell or invoke ffmpeg in its own process via an operating system API.

As an alternative, when you are running ffmpeg in a shell, you can redirect standard input to /dev/null (on Linux and macOS) or NUL (on Windows). You can do this redirect either on the ffmpeg invocation, or from a shell script which calls ffmpeg.

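For example, you can add -nostdin to the ffmpeg command line, redirect its standard input with < /dev/null on Linux, macOS and other UNIX-like shells, or redirect it with < NUL on Windows.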
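A sketch of both techniques in a UNIX-like shell, assuming ffmpeg is on PATH and a generated demo clip; INPUT.mpg and OUTPUT*.mp4 are placeholder names. In the Windows command prompt the redirect would be < NUL instead of < /dev/null.

```shell
#!/bin/sh
set -e
# Demo input only: a short test clip.
ffmpeg -y -f lavfi -i testsrc=duration=1:size=320x240:rate=25 INPUT.mpg

# Either tell ffmpeg not to read the console at all ...
ffmpeg -nostdin -y -i INPUT.mpg OUTPUT1.mp4 &
# ... or give it an empty standard input.
ffmpeg -y -i INPUT.mpg OUTPUT2.mp4 < /dev/null &

# Wait for both background jobs to finish.
wait
```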

3.20 How do I prevent ffmpeg from suspending with a message like suspended (tty output)?

If you run ffmpeg in the background, you may find that its process suspends. There may be a message like suspended (tty output). The question is how to prevent the process from being suspended.

For example:

The message 'tty output' notwithstanding, the problem here is that ffmpeg normally checks the console input when it runs. The operating system detects this, and suspends the process until you can bring it to the foreground and attend to it.

The solution is to use the right techniques to tell ffmpeg not to consult console input. You can use the -nostdin option, or redirect standard input with < /dev/null. See the FAQ entry 'How do I run ffmpeg as a background task?' for details.

4 Development

4.1 Are there examples illustrating how to use the FFmpeg libraries, particularly libavcodec and libavformat?

Yes. Check the doc/examples directory in the source repository, also available online at: https://github.com/FFmpeg/FFmpeg/tree/master/doc/examples.

Examples are also installed by default, usually in $PREFIX/share/ffmpeg/examples.

Also you may read the Developers Guide of the FFmpeg documentation. Alternatively, examine the source code for one of the many open source projects that already incorporate FFmpeg at (projects.html).

4.2 Can you support my C compiler XXX?

It depends. If your compiler is C99-compliant, then patches to support it are likely to be welcome if they do not pollute the source code with #ifdefs related to the compiler.

4.3 Is Microsoft Visual C++ supported?

Yes. Please see the Microsoft Visual C++ section in the FFmpeg documentation.

4.4 Can you add automake, libtool or autoconf support?

No. These tools are too bloated and they complicate the build.

4.5 Why not rewrite FFmpeg in object-oriented C++?

FFmpeg is already organized in a highly modular manner and does not need to be rewritten in a formal object language. Further, many of the developers favor straight C; it works for them. For more arguments on this matter, read 'Programming Religion'.

4.6 Why are the ffmpeg programs devoid of debugging symbols?

The build process creates ffmpeg_g, ffplay_g, etc., which contain full debug information. Those binaries are stripped to create ffmpeg, ffplay, etc. If you need the debug information, use the *_g versions.

4.7 I do not like the LGPL, can I contribute code under the GPL instead?

Yes, as long as the code is optional and can easily and cleanly be placed under #if CONFIG_GPL without breaking anything. So, for example, a new codec or filter would be OK under GPL while a bug fix to LGPL code would not.

4.8 I'm using FFmpeg from within my C application but the linker complains about missing symbols from the libraries themselves.

FFmpeg builds static libraries by default. In static libraries, dependencies are not handled. That has two consequences. First, you must specify the libraries in dependency order: -lavdevice must come before -lavformat, -lavutil must come after everything else, etc. Second, external libraries that are used in FFmpeg have to be specified too.

An easy way to get the full list of required libraries in dependency order is to use pkg-config.

See doc/example/Makefile and doc/example/pc-uninstalled for more details.

4.9 I'm using FFmpeg from within my C++ application but the linker complains about missing symbols which seem to be available.

FFmpeg is a pure C project, so to use the libraries within your C++ application you need to explicitly state that you are using a C library. You can do this by wrapping your FFmpeg includes in extern "C".

See http://www.parashift.com/c++-faq-lite/mixing-c-and-cpp.html#faq-32.3

4.10 I'm using libavutil from within my C++ application but the compiler complains about 'UINT64_C' was not declared in this scope

FFmpeg is a pure C project using C99 math features; in order to enable C++ to use them, you have to append -D__STDC_CONSTANT_MACROS to your CXXFLAGS.

4.11 I have a file in memory / an API different from *open/*read/ libc, how do I use it with libavformat?

You have to create a custom AVIOContext using avio_alloc_context; see libavformat/aviobuf.c in FFmpeg and libmpdemux/demux_lavf.c in MPlayer or MPlayer2 sources.

4.12 Where is the documentation about ffv1, msmpeg4, asv1, 4xm?

See https://www.ffmpeg.org/~michael/

4.13 How do I feed H.263-RTP (and other codecs in RTP) to libavcodec?

Although it is peculiar in being network-oriented, RTP is a container like any other. You have to demux RTP before feeding the payload to libavcodec. In this specific case please look at RFC 4629 to see how it should be done.

4.14 AVStream.r_frame_rate is wrong, it is much larger than the frame rate.


r_frame_rate is NOT the average frame rate, it is the smallest frame rate that can accurately represent all timestamps. So no, it is not wrong if it is larger than the average! For example, if you have mixed 25 and 30 fps content, then r_frame_rate will be 150 (it is the least common multiple). If you are looking for the average frame rate, see AVStream.avg_frame_rate.

4.15 Why is make fate not running all tests?

Make sure you have the fate-suite samples and the SAMPLES Make variable or FATE_SAMPLES environment variable or the --samples configure option is set to the right path.

4.16 Why is make fate not finding the samples?

Do you happen to have a ~ character in the samples path to indicate a home directory? The value is used in ways where the shell cannot expand it, causing FATE to not find files. Just replace ~ by the full path.

This document was generated on October 24, 2020 using makeinfo.

Hosting provided by telepoint.bg

Iffmpeg 5 4 1 – Convert Multimedia Files Between Formats Free

Convert multimedia files between formats.

iFFmpeg is a comprehensive media tool to convert movie, audio and media files between formats. The FFmpeg command-line instructions can be very hard to master, so iFFmpeg does all the hard work for you. This allows you to use FFmpeg without detailed command-line knowledge.

- Now works on APFS-formatted volumes (macOS 10.13 High Sierra)

- Added video filter 'Frame Steps'

- Added option 'Select one frame every N-th frame'

- Added x265 option 'Strength of adaptive offsets'

- Added 'SMPTE ST 428-1' to Color Primaries selector

- Improved FFmpeg error handling

- Improved handling of DVB_Subtitles

- Improved compiling FFmpeg command lines in general

- Now handles DPX frames

- Metadata types 'Service Name' and 'Service Provider' are now saved in user presets

- Better compatibility with FFmpeg 3.3 and higher

- Updated several iDevice presets

- Encoding to ProRes and enabling qscale is now processed correctly

- Fixed issue when enabling 'Split Audio Channels into separate files' (now always encoded to mono channels)

- Fixed issue setting the mapping when adding 2 or more audio streams and external subtitle files

- Fixed issue adding watermark images that are bigger than the movie size

- Fixed issue exporting to jpg images when 'x Frames' is selected

- Fixed issue checking for the latest FFmpeg build

- Fixed issue rendering Timecode when using very small font sizes

- Fixed issue encoding with 2 pass enabled

- OS X 10.7.3 or later

- Working FFmpeg OS X binary (see Related Links)